Ideas aren’t getting harder to find and anyone who tells you otherwise is a coward and I will fight them

You can still discover stuff, I promise

I’ve worked with a lot of research assistants over the years, and at some point we inevitably have The Talk: should they get a PhD? I was recently having The Talk with one particularly distraught student, who was worried her ideas aren’t good enough. “Maybe I’m not cut out for it,” she told me, “or maybe all of the easy ideas have already been done.”

Like her, I’ve worried that I was born too late and all the low-hanging fruit was picked before I got here. Fifty years ago, you could get a million citations just for showing that people like their own team the best, that kids will do something that they watch other people do, or that people think other people agree with them. Everything obvious has been done, and now it’s up to poor schmucks like me to figure out the hard stuff.

Lots of people who think hard about the progress of science seem to come to the same conclusion:

All the low-hanging fruit has already been picked […] it’s almost inconceivable that it could ever be otherwise.

[I]deas naturally get harder to find over time, and we should expect art and science to keep slowing down by default.

The days when a doctoral student could be the sole author of four revolutionary papers while working full time as an assistant examiner at a patent office — as Einstein did in 1905 — are probably long gone. Natural sciences have become so big, and the knowledge base so complex and specialized, that much of the cutting-edge work these days tends to emerge from large, well-funded collaborative teams involving many contributors.

Everyone agrees: we must resign ourselves either to tweaking what came before or spending our lives descending deeper and deeper into the idea mines, searching for the few nuggets of originality left.

I once found this idea seductive. Now I find it outrageous. It’s not just because it’s wrong; it’s an affront to the human spirit. People only discover stuff when they think it’s worth trying, and there have been entire eras of human history where people didn’t think it was worth trying. A meme like “ideas are getting harder to find” could drive the desire to discover back into hiding again, fulfilling its own abominable prophecy.

Somebody needs to defend the belief that mere mortals can still discover useful truths, and it’s me. I’m here to be that somebody.

1. Bias check

If we’re going to entertain, say, defunding theoretical physics on the grounds that there’s just no more useful physics to do, we should at least ask: could any cognitive biases be at play here?

I can see two. First, all ideas seem obvious in retrospect. Heliocentrism, germ theory, and randomized controlled trials look like no-brainers once someone explains them to you, but they took people thousands of years to figure out. (“E = mc^2? That’s just three letters and a number!”)

Second, it’s always going to feel hard to think of new ideas. What should we do next in physics, biology, music, or film? Gosh, I don’t know! I’d have to think pretty hard, just like everybody before me, and I might not come up with anything, just like almost everybody before me.

So if past ideas seem obvious and future ideas seem obscure, it’s tempting to conclude we live at the inflection point where ideas suddenly get harder to find. And maybe we do. But we’d feel that way even if ideas weren’t getting harder to find, and that should make us a little skeptical.

2. We’ve been wrong about this before

If our ancestors also thought they were running out of ideas and were wrong, then we should be wary about thinking the same thing. Our ancestors did think that, and they were wrong.

For instance, physics was apparently about to end in the 1890s:

Max Planck later remembered a professor telling him that during this period, “the system as a whole stood there fairly secured, and theoretical physics approached visibly that degree of perfection which, for example, geometry has had already for centuries.”

The British scientist William Cecil Dampier recalled his apprenticeship at Cambridge in the 1890s: “It seemed as though the main framework had been put together once for all, and that little remained to be done but to measure physical constants to the increased accuracy represented by another decimal place.”

British physicist J. J. Thomson: “All that was left was to alter a decimal or two in some physical constant.”

American physicist Albert A. Michelson: “Our future discoveries must be looked for in the sixth place of decimals.”

Surgery was nearing perfection in 1873:

There cannot always be fresh fields for conquest by the knife. There must be portions of the human frame that will ever remain sacred from its intrusion – at least, in the surgeon’s hand. That we have nearly, if not quite, reached these final limits there can be little question.

Psychology was on track to wrap up before 1920, according to the behaviorist John Watson:

I believe we can write a psychology […] and never go back upon our definition: never use the terms consciousness, mental states, mind, content, introspectively verifiable, imagery, and the like. I believe that we can do it in a few years…

It was only going to take a couple of dudes and a summer to solve some of the fundamental problems in artificial intelligence:

We propose that a 2-month, 10-man study of artificial intelligence be carried out during the summer of 1956 at Dartmouth College in Hanover, New Hampshire. The study is to proceed on the basis of the conjecture that every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it. An attempt will be made to find how to make machines use language, form abstractions and concepts, solve kinds of problems now reserved for humans, and improve themselves. We think that a significant advance can be made in one or more of these problems if a carefully selected group of scientists work on it together for a summer.

(They didn’t solve everything but they did start the field of artificial intelligence, so it was still a pretty productive summer, all things considered.)

When I wrote recently about how all popular stuff now comes from just a few franchises and superstars, one of the responses on Twitter was:

But there’s more original content than ever; it’s only popular content that's been dominated by reruns. Clearly, it’s easy to rush to the conclusion that we’re close to the end of ideas, and easy to be wrong.

3. Discoveries beget more discoveries

Pessimists seem to think that the universe was born with a long list of discoveries, ordered from easy to hard, like this:

As time goes on, the thinking goes, more and more of the easy discoveries get crossed off the list, leaving only the hard ones. But knowledge doesn’t work like this at all. Every discovery opens up additional discoveries to make:

Which in turn lead to more discoveries:

And so on.

You might worry that this means discovery gets harder over time because you have to follow a chain to its end before you can add a new link. Fortunately:

4. New discoveries replace and compress old ones

We can all agree that discovering fire was pretty rad. The first humans to do it probably spent a lot of time learning which kind of kindling was best, how to nurse a spark into a flame, and what sorts of fires were best for cooking vs. heating. They must have painstakingly passed this knowledge from generation to generation, and youngsters had to practice making lots of fires before they got it right.

But in 2022, I don’t have to do any of that. When I need to cook, I turn on the stove and it makes fire for me. Or I tap a button on my phone and a human makes food for me using their own fire, then they bring it to me. When it gets cold, a fire in the basement turns on, heats up some water, and then the water flows up into the room where I live and makes me warm, too. I don’t really know how any of this works. All the fire-knowledge I’ll ever need is encoded into the innovations that surround me.

This is how science works, too. To do science, you don’t need to start with the dawn of all human knowledge and then work forward. You start with the current state of knowledge and go from there. Learning the history of science is helpful for shaping your intuitions and giving you perspective, but you don’t actually have to read Darwin, for example, to do evolutionary biology.

That’s why I’m puzzled by the claim that scientists must labor under an ever-increasing burden of knowledge. The author of that paper writes: “If one is to stand on the shoulders of giants, one must first climb up their backs, and the greater the body of knowledge, the harder this climb becomes.” This suggests that if you peek into PhD programs, you’ll see lots of students bent over their books, desperately trying to learn everything that’s come before so they can start their own projects. “I can’t do any physics yet,” you might hear them lament. “I’m only up to Huygens!” Instead, you’ll see PhD students doing original research from Day 1, and often long before. Indeed, many students start doing more interesting work once they stop looking at lots of previous work, as it finally frees them from imitating other people and searching for “gaps in the literature,” two strategies that are unlikely to yield anything interesting.

5. Things change, which means new discoveries to make

The world heats up. Stars explode. Species invade. Tectonic plates shift around. The internet upends society. Wars break out, diseases spread, and an ambiguously colored dress turns brother against brother. All of this demands explanation, and we’re the ones who get to explain it, because all the scientists of yore are dead. We probably won’t get far before a whole new deluge of facts arrives, or before we become the scientists of yore.

6. Lots of the old discoveries weren’t even right!

It turns out many scientific studies don’t work when you try them again. Lots of cancer studies may be bunk. A coding glitch may have ruined 150 chemistry papers. When the premier journal in social psychology publishes evidence of ESP, you know something is amiss.

This chaos is a ladder for young scientists. We thought all the juicy, low-hanging fruit had been picked, but now it’s back on the tree, ripe and ready for us to snatch. When we’re standing on the shoulders of giants and they start buckling underneath us, that’s our shot to become the giants.

7. New tools allow us to answer old questions

Professors delight in telling graduate students that they had to do their data analysis with punch cards, write their dissertations on typewriters, and call up strangers on the phone to ask them to participate in studies. Now you can analyze data by pointing and clicking, write your dissertation in a word processor that fixes your typos for you, and run 1,000 participants in an afternoon on Amazon Mechanical Turk. We’ve got electron microscopes and automatic pipetters and AI-powered transcription and a million other tools that allow us to do research that was impossible or unfeasible even a decade ago. So even if we’re picking the lowest-hanging fruit, we’re standing on an ever-ascending scissor lift.

Do these ideas seem that hard to you?

I’m a psychologist, and I understand that most people aren’t thinking about psychology when they fret about humans running out of ideas, and that psychology hasn’t been a formal discipline as long as the natural sciences have. Still, we’ve certainly been thinking about minds and behavior for a very long time, so if ideas get harder to discover over time, it should be pretty hard to do psychology these days.

Let me tell you: it’s not. I’ve published two papers in what most scientists consider the third-most prestigious journal in all of science. (I think journals are bad, but that’s a story for a different day.) The idea behind the first paper was, quite literally, “Do conversations end when people want them to?” The idea behind the second paper was “Do people know how public opinion has changed?” These ideas are so low-hanging you could trip over them. My conversation studies could have been run a hundred years ago. My public opinion studies could only have been run recently because we haven’t been measuring public opinion all that long. So one low-hanging idea went unpicked for a century, and another could only have been picked in the last few years.

The hard part of these ideas wasn’t coming up with them; it was picking them out of a bunch of worse ideas. According to my notes, I pitched 59 research ideas to my advisor over the first two years of my PhD. Only two of these (3%!) became research projects. Of the remaining 97% that went nowhere, only 26% were abandoned because we found out they had been done before. (There’s no guarantee we would have continued them otherwise; finding a previous paper was just a good reason to move on.) Most of the rejected ideas just weren’t that interesting.
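For anyone who wants to check the arithmetic on that funnel, here is a minimal sketch. Only the 59 pitches, the 2 projects, and the 26% figure come from my notes as quoted above; the absolute counts it prints are derived by rounding, so treat them as approximations rather than numbers I actually recorded.

```python
# Idea funnel from the paragraph above.
# From the text: 59 ideas pitched, 2 became projects, 26% of the rest were "already done".
pitched = 59
became_projects = 2

success_rate = became_projects / pitched
went_nowhere = pitched - became_projects

# Assumption: the 26% is a rounded share of the ideas that went nowhere,
# so the count below is an estimate, not a figure from the notes.
already_done = round(0.26 * went_nowhere)
dropped_for_other_reasons = went_nowhere - already_done

print(f"became projects: {success_rate:.1%}")
print(f"went nowhere: {went_nowhere}")
print(f"already done (approx.): {already_done}")
print(f"dropped for other reasons (approx.): {dropped_for_other_reasons}")
```

Under that rounding assumption, roughly three quarters of the dead ideas died for reasons other than "somebody got there first."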

If you’re looking for low-hanging fruit in biology and medicine, see Slime Mold Time Mold. Their contaminant theory of obesity may be revolutionary, and they’ve told me before that their work would have been almost impossible even ten years ago, because most of the sources they use have only recently been published or posted online—that is, the fruit just got lowered. Right now they’re recruiting people to eat nothing but potatoes, the fruit so low-hanging it’s literally underground.

The abundance of ideas is even more obvious outside science. If ideas were getting harder to find, you might expect that the most successful people are the ones who have discovered something really complicated. Instead, Jeff Bezos was like “what if we sold stuff on the internet” and now he’s the second-richest guy in the world. Zhang Yiming was like “what if people watched short videos,” invented TikTok, and now he’s got $50 billion. Doja Cat was like “bitch, I’m a cow” and she just won a few Billboard awards and bought a house worth $2.2 million.

Science is like elevators

All of this makes me very skeptical that ideas are getting harder to find. Nobody has ever shown direct evidence that they are, and I’m not even sure what that evidence would look like. Instead, most of the research on the topic, including the appropriately titled “Are Ideas Getting Harder to Find?”, simply points out that while the number of researchers has increased, the output per researcher has gone down. I have a lot of objections to this paper, but they’re a little bit technical so I’ve put them in the Appendix below. Suffice it to say that a) their equations and measures seem dubious; b) their results are consistent with other explanations; and c) it actually seems pretty benign and unsurprising that as you add more people to a task, the output per person falls.

I don’t think we’ll actually get far by arguing over the data, because we’ve got the underlying model all wrong. The metaphors we use to describe scientific progress—foraging! mining! drilling!—all assume that each generation of scientists simply does the same thing as the previous generation, just with more complexity and precision. Our ancestors mined the surface; we mine the depths.

That doesn’t seem quite right. Democritus and Einstein both contributed to physics, but one made claims with words and the other made claims with numbers. Sigmund Freud and Lee Ross were both great psychologists, but one did cocaine and free-associated while the other put people in situations and recorded what they did. Einstein and Ross didn’t simply forage farther or drill deeper than Democritus and Freud; they did something fundamentally different.

You don’t even have to look across thousands of years to see these qualitative shifts. Twenty years ago it was perfectly acceptable to run a psychology experiment that had thirty participants in each of four conditions, drop a few outliers, do a bunch of statistical tests until one of them spits out p < .05, and publish the results. Now we know how easy it is to get statistically significant results from a few seemingly-innocuous choices like these, and publishing that same paper today would get you laughed off Twitter. I predict—well, I hope!—similar shifts will happen in fields like biology, where it will become ridiculous to make strong claims about humans from lab mice, and in neuroscience, where it will become ridiculous to put people in a big magnetic tube, look at where their brains light up, and claim you’ve discovered consciousness, or something.

Science is not like foraging, mining, or drilling, where we keep doing the same thing and it keeps getting harder. It’s more like discovering an elevator left for us by aliens. At first we have no idea how it works; we get in and push a button, and now we’re climbing dozens of floors in a matter of seconds. We excitedly calculate that, at this rate, we’ll reach outer space in a few hours!

But at some point, the elevator stops. We try everything, but we can’t get it to go any higher. Eventually we figure out how elevators work and we start building even taller elevators. Another golden age ensues—there’s taller elevators every year!

But as the elevators get taller, the engineering gets more complicated. We need more elaborate support structures and stronger materials just to keep the elevators from toppling over. Pessimists start proclaiming that we’ll never reach the stars, or even the stratosphere. The elevators simply can’t reach that high!

The way to go higher, of course, is not to build taller elevators. It’s to invent hot air balloons. Once we discover lighter-than-air travel, it’s easy to fly as high as the highest elevator, and far above it. And when the balloons can’t go any higher, the solution is helicopters. And when the helicopters can’t go any higher, the solution is rocket ships. And when the rocket ships can’t go any higher, the solution is something we haven’t invented yet. No doubt these space conveyances will be complicated, but so were elevators before we knew how they worked.

This metaphor captures an important truth that other metaphors don’t: not every paradigm shift is going to be equally useful to the average person, or equally impactful on the same dimension. People may point out that while improving elevators helps us build taller apartment buildings, inventing hot air balloons does not. “Science is providing diminishing returns to housing,” they say gravely. That’s true, but once you can build really tall buildings, limits on housing quickly become political rather than technological. For example, 40% of buildings in Manhattan could not be built today because of increasingly strict zoning requirements. (Some of them would be forbidden because they're too tall and contain too much housing!) Once we solve the scientific part of a practical problem, we can’t continue to measure scientific progress by how well we’re doing on that problem.

How to stop science

Thomas Kuhn said something like this sixty years ago. He pointed out that scientific fields tend to putter along until people start noticing problems with the prevailing paradigm (“The planets don’t move like the theory says they should!”). Eventually the problems get too big to ignore and the whole field flies into crisis, and things only settle down when someone proposes a new paradigm that can make sense of everything. Then the cycle repeats.

Two key ingredients in scientific revolutions, then, are noticing problems and taking them seriously. Kuhn assumed scientists do both of those things naturally, and maybe that was true in 1962. But it doesn’t have to be. As fields formalize, they can get very good at ignoring and suppressing problems, delaying revolutions indefinitely.

One way professional science does this is by preventing divergent thinkers from entering in the first place. The usual way of becoming a scientist is to become a professor, ideally at a wealthy institution that can furnish you with lots of science gizmos and attractive letterhead for your grant applications.

The path to that prestigious professorship has become ludicrously competitive. Harvard, the ideal first step in an academic career, accepted just 3.19% of undergraduate applicants last year, down from 7.1% in 2012. Harvard doesn't publish overall PhD acceptance rates—the next step on the academic ladder—but the engineering school does and it’s a mere 7%. Only 15%-30% of PhDs who make it through this gauntlet and still want a permanent academic job will actually get one, and very few will be at the fancy places.

To win one of these coveted positions, then, you need to do everything exactly right from your freshman year of high school onward: get good grades, garner strong recommendations, work in the right labs, publish papers in prestigious places, never make anybody mad, and never take a detour or a break. (I occasionally get emails from high schoolers begging to work in my lab so they can get their name on a paper.) Professors who got their jobs decades ago tell us it wasn’t like this. In this hypercompetitive environment, the most fervent careerists will outcompete everybody else. And fervent careerists don’t produce revolutionary science.

The other way professional science can prevent problems from accruing is by simply refusing to publish them. Pre-publication peer review has only been popular for about fifty years—the prestigious medical journal The Lancet only started reviewing papers in 1976. If your paper or grant application threatens to undermine Dr. Tweedledum’s theory, there’s a good chance Dr. Tweedledum is going to review it, and they’re not inclined to be kind. Everybody knows this, of course, so they don't even try. You need publications and grants to survive, so it’s much better to work on something you know will be publishable at the end. This also rules out revolutionary science.

Professionalized science, then, may force us to keep building elevators even though we can’t get them to go any higher. Weirdos with crazy schemes for hot air balloons simply don’t get jobs; after all, they don’t even have a degree in elevators! Anyone lucky enough to stay in the pipeline has to apprentice under an elevator-builder, so building elevators is all they’ll ever know. Besides, everyone knows that you can’t get helicopter research published in the Journal of Elevators, and the National Elevator Foundation will never give you money to build one. And our esteemed elders assure us that elevators have an excellent track record and the recent slowdown in elevator progress is only because it’s harder to build taller elevators, so really what’s needed is more elevator funding. But even then, they warn, it won’t ever be possible to reach the stratosphere, let alone outer space. We must resign ourselves to looking for discoveries in the sixth place of decimals.

A refuge for cowards

“Ideas are getting harder to find” is a pretty bleak thing to believe. It says, “Look around the world. This is pretty much as good as it gets; the returns start diminishing from here. All the problems you see are unlikely to be solved anytime soon, so you better get used to them.” Why would anyone agree to such a thing without putting up a fight?

I think, deep down, part of us wants to believe it, because pessimism is really a clever excuse for cowardice. If you believe people are evil, you can be excused from ever trying to befriend them and maybe being rejected. If you believe the world is stacked against you, nobody can blame you for always playing it safe. And if the easy ideas have already been taken, you can’t be expected to come up with anything new.

I don’t blame people for using pessimism as an opiate; we could all use some relief right now. Wages are stagnant, inequality is rising, and if you don’t have a house yet, good luck buying one. The government is sometimes run by people you dislike; the rest of the time it’s run by people you hate. People are dying of preventable diseases while other people are launching cars into space. Everything’s on fire and nobody’s doing anything about it. This doesn’t seem like a world where much is possible, so why not lower your expectations?

But if we want a better world, we have to believe it’s possible to create one. And that takes courage, because if you truly believe in a better world, you have to do something about it. You don’t get to smugly smirk as the ship sinks; you have to start pumping the water out. Smirking seems easier than pumping at first, but pumping turns out to be really fun. You start to feel useful. You make friends with the other people trying to keep the boat afloat. You stop caring about all the people who say what you’re doing will never work. Ultimately, pumping is way easier than smirking, and it feels better too. Optimism cures pain; pessimism, like painkillers, merely dulls it.

The way I see it, if you want to write a song the world hasn’t heard before, you have two choices. You can spend your time calculating how there’s only a finite number of different melodies, so eventually humans will run out of songs to write, so why bother. Or you can pick up a guitar and play.

APPENDIX: the technical objections

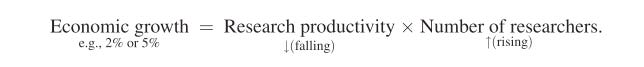

This paper by Bloom, Jones, Van Reenen, and Webb claims that ideas are getting harder to find. They summarize their argument like this:

There’s something very strange about this equation. It implies that the only way to get negative economic growth—that is, a recession—is for either research output or the number of researchers to be negative, which seems unlikely to happen. But if both are negative, economic growth is positive! Clearly, the relationship between researchers, productivity, and economic growth is a lot more complicated, and that makes me doubt that economic measures do a good job capturing the progress of science.
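To spell out the sign argument, here is a short sketch. It assumes the summary equation has the product form the paper gives in its introduction (economic growth equals research productivity times the number of researchers). The symbols g, \alpha, and S are shorthand chosen for this sketch and may not match the paper's notation.

```latex
% Assumed form of the summary equation (shorthand symbols are illustrative):
%   g      = economic growth rate
%   \alpha = research productivity (output per researcher)
%   S      = number of researchers
\begin{align*}
g &= \alpha \cdot S \\
g < 0 &\iff (\alpha < 0 \text{ and } S > 0) \ \text{or}\ (\alpha > 0 \text{ and } S < 0) \\
\alpha < 0 \text{ and } S < 0 &\implies g > 0
\end{align*}
```

Since a headcount can't be negative, the only route to a recession in this form is negative research productivity, which is hard to interpret.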

I’ve got three other gripes with this paper. First, it casually swaps correlation and causality, assuming that increasing numbers of researchers have been necessary for maintaining the same level of productivity. For instance:

...this growth has been achieved by engaging an ever-growing number of researchers to push Moore’s Law forward. In particular, the number of researchers required to double chip density today is more than 18 times larger than the number required in the early 1970s. At least as far as semiconductors are concerned, ideas are getting harder to find.

This isn’t evidence that making better computer chips required additional researchers. We don’t know what the growth rate would have been if we had kept the number of researchers the same.

My second gripe is that the theory seems to explain too much. The authors find research productivity slowing down everywhere they look, which they interpret as robust evidence for “ideas getting harder to find.” Even if ideas really were finite and got harder to find over time, should we really expect that to be happening in every single field in this exact time period? There isn’t a single discipline where some breakthrough led to a period of increased productivity? Some of these fields, remember, are way younger than others. Shouldn’t we expect some of them to still have lots of easy ideas left, and others to have fully entered their twilight stage, where there are only extremely hard ideas remaining? Science has been going on for a while, so why should this be happening only in our lifetimes, and not fifty years before or after? This seems like a pretty extraordinary coincidence.

Which brings me to my final gripe: the paper interprets any drop in research productivity as “ideas getting harder to find.” But that’s just one explanation; there are lots of plausible alternatives. Ben Southwood suggests it may be because industrial labs were replaced with less efficient academic departments, or because geniuses don’t go into research anymore, or because lead poisoning is making us dumber. Jay Bhattacharya and Mikko Packalen think it’s because scientists have become obsessed with citations, which leads them to do incremental rather than revolutionary work. They illustrate their theory with this extremely charming figure:

These explanations sound plausible to me. And I’d go even further. Above a pretty low threshold, we should expect per capita productivity to drop whenever we add more people.

Say you get two guys to remodel your kitchen, and they tell you they can do it in two weeks. “Perfect,” you say. “I’ll just hire 2000 guys, and the job will be done in about 20 minutes!”

That won’t work, of course, for all sorts of reasons. (Though it does make a good episode of Nathan for You.) You can’t fit that many people in your kitchen—even 20 guys would be bumping into each other all the time. It’s unlikely that the 2000th contractor you hire is going to be as good as the first. Working with that many people requires lots of management and planning. The work can’t be done entirely in parallel—you can only hang the light fixtures after the wiring is done, for example. And some things simply can’t be sped up: paint just takes a while to dry, no matter how many people are waiting around.
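Here is a rough sketch of why the 20-minute estimate falls apart. The first function is the naive assumption that work divides perfectly among workers. The second swaps in Amdahl's law, a standard formula from parallel computing (not something the paper or my contractors use), where some fraction of the job is strictly sequential. The 10% serial fraction is a made-up number for illustration, and the sketch ignores crowding, management overhead, and declining contractor quality, all of which would make things worse.

```python
MINUTES_PER_DAY = 24 * 60


def naive_days(base_workers, base_days, workers):
    """Perfectly divisible work: time shrinks in proportion to headcount."""
    return base_days * base_workers / workers


def amdahl_days(base_workers, base_days, workers, serial_fraction):
    """Amdahl's law: only the parallel share of the schedule speeds up."""
    parallel_part = (1 - serial_fraction) * base_workers / workers
    return base_days * (serial_fraction + parallel_part)


# From the text: 2 workers finish in 2 weeks (14 calendar days); we hire 2000.
naive = naive_days(2, 14, 2000)

# Hypothetical: 10% of the schedule (drying paint, wiring before fixtures)
# cannot be sped up no matter how many people are standing around.
with_serial = amdahl_days(2, 14, 2000, serial_fraction=0.10)

print(f"naive estimate: {naive * MINUTES_PER_DAY:.0f} minutes")
print(f"with 10% serial work: {with_serial:.2f} days")
```

With these inputs the naive estimate comes out to about 20 minutes of calendar time, while even a 10% sequential share keeps the job at roughly 1.4 days. Output per contractor collapses long before you get to 2000.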

Some of the same problems may arise in science. It takes a lot of work to manage lots of researchers, which is why people who lead big labs today spend much of their time being CEOs, fundraisers, and human resources managers rather than scientists. A few individuals may disproportionately drive progress, so adding researchers might actually decrease progress per capita. Some fields may be stymied until progress is made in other fields. And some science simply can’t be sped up: humans age, bacteria multiply, and light waves travel at the same rates no matter how many people are studying them.

Plus, researchers have lots of perverse incentives that remodelers don’t. The remodelers all want the same thing and are willing to follow the same plan and take direction from a supervisor. Researchers, on the other hand, compete with each other. They might steal each other’s ideas, or purposefully tank each other’s papers and grant proposals. Their jobs depend on them looking productive, so they pump out pointless papers to lengthen their CVs. These problems only grow as researchers multiply and the field gets more competitive.

That’s why I wouldn’t find it very surprising if per capita research output has dropped as the number of researchers has increased. It isn’t convincing evidence that ideas are getting harder to find. I still think we have a big problem, but it’s a solvable social problem, rather than an unsolvable scientific problem.

Good thoughts overall! A few issues I had:

1. I wouldn't say heliocentrism, germ theory, or RCTs are obvious in retrospect. I actually just wrote a whole blog post on how germ theory would be impossible without microscopes (https://trevorklee.substack.com/p/a-medical-thought-experiment), and I don't think anyone reading this blog would be able to prove heliocentrism without Googling how to. Meanwhile, corporate marketing still hasn't figured out RCTs, even though there's a direct financial incentive for them. Also, the concepts (and math) embedded in E=MC^2 are, again, beyond the ken of almost anyone reading this blog.

2. There's a difference between "we're running out of ideas" (which is silly) and "all the low-hanging fruit has already been picked".

3. There's a difference between discoveries in physics, which are supposed to be true for everywhere at all times, and discoveries in psychology, which can't make that assumption (i.e. I'm not sure cavemen abided by the same conversational conventions we do).

4. I think bringing in Thomas Kuhn earlier would have helped. There's always a flood of "easy" papers at the beginning of a paradigm shift, as the basic elements of the paradigm get fleshed out and improved. This also is often true when new instruments get developed.

The article "ideas are harder to find" is based not on ideas but on the final economic implementation of ideas that impact the economy (which is where they get the total factor productivity numbers). That implementation is ideas + economic feasibility + resource availability ($$$) + permissions (regulatory) + defeating political attacks from those who fear or are threatened by the innovation. Any one of these steps in the series can be the rate-limiting step. Even if you have an infinite number of scientific ideas, the rate of economic growth and apparent innovation will be limited by one of the other factors.

The authors also misuse companies' R&D spending as a metric for saying that R&D productivity is declining, without noting that the actual areas being researched are changing over time. In an evolving technology area like chips or GMOs, the researchers borrow from other areas of technology which, at that moment, are more advanced than their new area. The cost of the R&D in that spill-in area of technology is on someone else's books. For example, the initial semiconductor manufacturers used clean rooms from hospital suppliers, vacuum systems from the space program, everything from saws to grinding/polishing systems from metallurgy, etc. When the feature size of chips evolved to the point where a virus looms as a mountain of impurity, they were compelled to do R&D on clean rooms. That technology advance then spilled back to hospital clean room technology. The required R&D was then on the chip companies' books, being counted as declining R&D productivity.

The spill-in and spill-out of R&D benefits for modern multi-factor innovations is very complex, and results in areas like biotech obtaining a huge spill-in of technology from the computer/chip area. To analyze DNA by looking at the color of light for each letter (there are only 4 letters) being added onto the sample DNA, they needed holes in the 70 nm range, which relied on a spill-in from semiconductor technology. Some of these biotechnology areas are growing even faster than Moore's Law as they adapt the R&D from both semiconductors and computers.

Meanwhile, the new semiconductors with 2 nm line widths need very hard UV light, close to soft X-rays. The light sources hit a small drop of tin with a laser beam to heat it to a high-temperature plasma state, but this technology probably has a massive R&D spill-in from the billions spent on inertial confinement fusion, where a small pellet containing hydrogen isotopes is compressed to the temperatures of our sun for fusion, also using converging laser beams. A few billion in R&D spill-in is good.

The "low hanging fruit" mental model and even the "tree of knowledge" model don't work very well. The very low-hanging, centuries-old fruit of Maxwell's equations, General Relativity, and the Schrödinger equation has long gone to seed and produced trees whose branches meld with every branch of the founding tree of human knowledge they cross. When every point of crossing branches effectively starts a new tree, the tree model ceases to make sense, and network models become more useful images. Your iPhone uses Maxwell's equations, General Relativity, and quantum mechanics to make it work. Those are not elements of Apple's research budgets.

As the overall "knowledge network" grows, more possible ideas and technologies are created at a rate much faster than the developed world's economies grow (probably up around 10%/yr). Clearly, we can conclude that the slow step controlling economic growth is not a lack of "ideas". In science, the word "idea" has a meaning that excludes economic and political factors outside the idea itself.

The regulatory and permission areas are expanding rapidly and getting slower. Imagine if Apple had to build the iPhone in California and needed 100,000 employees in a new facility utilizing hazardous materials, highways, etc. This is a state where trying to get permission for desalination of seawater, in water-short Southern California, has proved impossible after decades of trying and tens of millions of dollars spent in the effort. Meanwhile, Israel now obtains 50% of its water supply from desalination using RO technology that was developed at UCLA in the '50s. Good ideas abound. The sadly limiting factor is not a lack of ideas but obtaining permission to make use of them, which is preventing the United States from fulfilling its potential, to the detriment not only of our country, but of the world.

To me, the whole "idea shortage" concept is a convenient excuse to blame innovation stagnation on something other than the true "rate controlling" step, which has been put into place by government regulation. With the web of knowledge now being so large, people like the authors of this article (Bloom, Jones, Van Reenen, and Webb) may not have the basic knowledge required to see the real complexity and only see what is in the sub-network of economics. Just viewing the economics (accounting numbers), we can get accounting artifacts that say R&D productivity is decreasing when the R&D is just covering more breadth.