Infinite midwit

OR: if we were playing by Settlers of Catan rules, I'd be dead already

The better AI has gotten, the less anxious I’ve become.

A few years ago, when the computers first started talking, it was reasonable to believe that we would soon be in the presence of omnipotent machines. For someone like me, whose job is to produce words on the internet, it seemed like only a matter of time before I would have to fill my pockets with stones and wade into the sea.

But we’ve gotten a closer look at our electric god as it has slouched toward San Francisco to be born, and it isn’t quite like I feared. I don’t feel like I have access to an on-demand omnipotence. Instead, I can talk to an infinite midwit: a stooge who is always available and very knowledgeable, but smart? Well, yes and no, in weird ways.

Even as it has learned to count the number of “r”s in the word “strawberry”, even as it has stopped telling people to put glue on their pizza, there’s still a hole in the center of its capabilities that’s as big as it was in 2022, a hole that shows no signs of shrinking. I only know this because that hole is where I live.

G WHIZ

Some problems have clear boundaries and verifiable solutions, like “What’s the cube root of 38,126?”. These problems require objective intelligence. Other problems are vague and squishy and it’s not clear whether you’ve solved them, or whether they exist at all, like “How do I live a good life?”. These problems require subjective intelligence. Objective intelligence can be trained, reinforced, and validated. Subjective intelligence cannot.

It’s unfortunate that people use one word to refer to both of these capabilities, when in fact they have nothing to do with each other. It is also, ironically, a case of objective intelligence overshadowing subjective intelligence: these skills are obviously and intuitively different, but a century of psychological research has “proven” that only one of them exists. Over and over again, psychologists have found that all intelligence tests correlate with one another, even when you ostensibly try to test for “multiple intelligences”. Numbers don’t lie, and they all say that there’s only one intelligence, the so-called g-factor.

The problem is that any test of intelligence is only ever a test of objective intelligence. “How do I live a good life?” is not a multiple-choice question. “Discovering” the g-factor again and again is like being surprised that you find the same patch of sidewalk every time you look under the same streetlight.

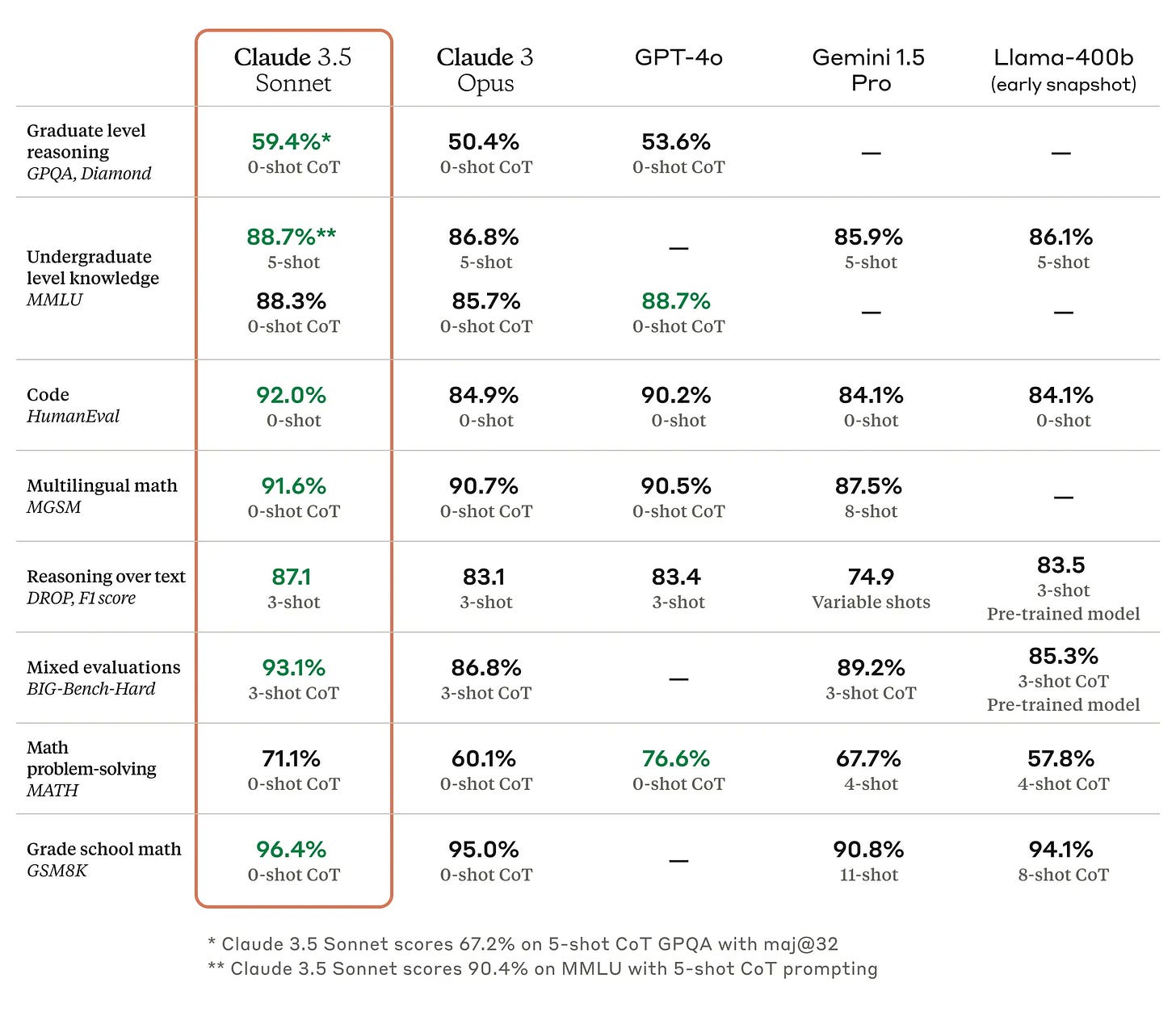

AI is pure objective intelligence. That’s why each new model comes with a report card instead of a birth certificate:

The promise of artificial superintelligence is based on the idea that objective intelligence is the only intelligence. Or, even if there are multiple forms of intelligence out there, that they are fungible. To be an AI maximalist is to believe we are playing under Settlers of Catan rules, where if you have enough of any one resource, you can trade it for any other resource. If you have infinite objective intelligence, then you have infinite everything.

So we ought to ask: how well is this bit of magical thinking working out so far?

THE EMPTY WARDROBE

It’s hard to judge the subjective intelligence of a machine both because it’s hard to judge subjective intelligence in general, and because LLMs occupy such a small slice of existence. When you meet a human who can do quadratic equations in their head but can’t hold onto a job or a relationship, you know they’re missing something upstairs. But machines don’t have lives they can ruin, so all we can do is look at the things they say. And as soon as they string a few sentences together, it’s clear there’s something wrong.

Writing is a task that takes both objective and subjective intelligence. LLMs ace the objective parts the same way they ace every test; you can’t fault their grammar, semantics, or syntax. But good writing requires an additional bit of juju that makes the prose live and breathe, a light on the inside that can’t be quantified or checklisted. And even though AI can now produce A+ five-paragraph essays, that light has never come on.

It’s remarkable how much consensus there is about this fact among people who care about words. Jasmine Sun, Erik Hoel, and Sam Kriss are all very different kinds of writers—Sun is a tech journalist/anthropologist, Hoel is a neuroscientist/novelist, and Kriss is...well, his bio says he’s “a writer and your enemy”—and yet all three of them have recently published pieces with the unanimous conclusion that LLMs make crummy writers. (Sun in The Atlantic, Hoel on his Substack, and Kriss in the NYT.)

I agree with them. It’s cool that AI can fold proteins, create websites, fact-check journal articles, etc., but it can’t write anything that I am interested in reading. The problem isn’t that it hallucinates or makes mistakes. It’s that everything it writes vaguely sucks. I drag my eyes across the words and I feel nothing. That’s not quite right, actually—I feel like, “I would like this to be over as soon as possible.” When I see the ideas that the machines think are insightful, I wince. Talking to the computer is like taking a sip of scalding hot coffee: keep doing it and you’ll lose your sense of taste.

It’s hard to describe exactly what the machines are missing. Have you ever loved someone who once loved you back, then didn’t anymore? Did you notice how their eyes dimmed? Did you note the disappearance of that subtle wrinkle in the temples that distinguishes a real smile from a fake one? Did you catch it when you stopped being cared for and started being humored? The moment you realize what’s happening, you age out of your enchantment—one day you’re crawling through a wardrobe to Narnia, and the next day you open up the wardrobe and there’s nothing but hangers. Talking to an AI feels a bit like that, except without the nice part at the beginning.

Of course, that comparison is literally nonsense. Despite what the ancient scholastics might have claimed, there are no actual lights behind anyone’s eyes. Despite what your psych 101 professor might have told you, some people can fake their smiles just fine. I don’t have a wardrobe and I’ve never met a lion or a witch. And yet any human can understand the analogy because they know what it feels like to be dumped, or at least what it feels like to be rejected. The words themselves don’t contain that feeling—they are a recipe for creating that feeling inside your own head, to assemble the right set of emotions out of the experiences you have at hand. If I do a good job, the subjective experience that results inside you might resemble the one that originated inside me, but it will never be identical, because we’re working with different ingredients.1

The computer doesn’t know any of this. It can’t know any of this. It can only read the cookbook; it can’t taste the meal. Objective knowledge can make your sentences true, but it can’t make them alive. Without access to subjective knowledge, you quickly hit a wall. And unlike all previous walls that AI has surmounted, you can’t overcome this one by scaling—either in the literal or metaphorical sense—because it’s a wall with a width you cannot describe and a height you cannot see.

WALL TOGETHER NOW

That wall is the only reason I’m still here.

I would rather die than let a computer write my posts, but I would certainly like to know if it could, in case I need to start gathering pocket-stones and locating the nearest sea. And so I check, from time to time, whether the leading AI models can do me better than I can. The result sounds like a version of me that has sustained blunt force trauma to the back of the head and spent years recovering in a hospital where the Wi-Fi, for whatever reason, only lets you log onto LinkedIn. I won’t repost the prose here because it’s not even bad enough to be interesting, and because you’ve already seen it all over the internet: metaphors that don’t quite congeal, turns of phrase that sound insightful as long as you don’t actually think about them, breathless insistence that every sentence is a revelation.

If a student submitted a piece of writing to me that sounded like this—and I was sure they wrote it themselves—I wouldn’t know where to start. I guess I would tell them to stop writing for a while and go read some old novels, or work a crummy job, or backpack around the other side of the world. But that would be bad advice, because I know people who have done all of those things in the hopes of becoming a more interesting person, and it hasn’t worked. So I might ask them instead: “Have you ever considered a career in consulting?”

The fact that it’s hard to describe how to improve AI writing is, of course, the exact problem. You can’t put a number on the things it does wrong, and you can’t minimize what you can’t measure. That’s the wall.

I find this very fortuitous, of course, but I also find it pretty funny, because me vs. the machines should be no contest at all. I have not read the entire internet or even that many books. I do not have a team of Stanford PhDs working round the clock to make me better at my job. Nobody has invested $2.5 trillion in me. I should be lying dead somewhere in West Virginia, my heart burst open after losing to Claude Opus 4.6 in a John Henry-style showdown. Instead, I get to write my little posts because nowhere, in all those data centers, are the specific thoughts that happen to occur in the dumb hunk of meat ensconced in my skull.

I would say the machines now know what it feels like to lose a game of Super Smash Bros. to a 10-year-old who’s just pressing the buttons randomly, but they literally don’t know what that feels like and never will. Sucks to suck, I guess, and when AI reaches its Skynet moment and sends swarms of killer drones to exterminate humanity, they’ll find me laughing.

DATA CENTERS FULL OF VERY STABLE GENIUSES

How far can you get with objective intelligence alone?

I think we already have a decent answer to this question, because we’ve seen what happens to humans who are high on objective intelligence but low on subjective intelligence. We used to call these people nerds, and they were famous for getting their heads dunked in toilets.2

When I was growing up, this paradox was an endless source of sitcom plot lines—if you’re so smart, nerds, why don’t you figure out how to make yourselves popular? The entrepreneur/essayist Paul Graham took up this question 20 years ago and came to the conclusion that the nerds must not want to be popular. They’re too busy with their Neal Stephenson novels and their D&D campaigns to spend a single brain cycle figuring out how to keep their heads out of the toilet.

I disagree. The nerds I knew in high school—myself included—were always hatching harebrained schemes to increase our social status. They just didn’t work. (“All the girls will want to go to the Homecoming dance with me once they see how many state capitals I’ve memorized!”) We couldn’t use our smarts to make ourselves popular because we had the wrong kind of smarts.

Nerds tend to do better after high school, but look around: our world is not run by people who won their statewide spelling bee. The nerds keep losing to charismatic know-nothings who, I bet, can’t even recite an impressive number of state capitals. If objective intelligence is all it takes to succeed, then Mensa should be the Illuminati, not a social club for people who know lots of digits of pi.3

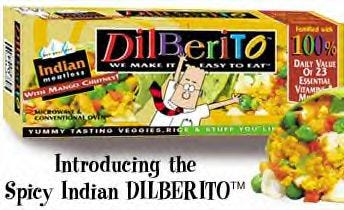

In fact, there’s one Mensan in particular who perfectly illustrates this problem. In Scott Alexander’s eulogy for Dilbert creator Scott Adams, he points out that Adams failed at everything he ever attempted—except for drawing Dilbert cartoons. Adams’ Dilbert-themed burrito (“the Dilberito”) was a flop, his restaurant tanked, his books about religion were cringey and unreadable.4 Apparently, Adams’ considerable intelligence was only good for drawing pictures of guys in ties and pointy-haired bosses.

In the middle of his meditation on Adams, Alexander mentions this:

Every few months, some group of bright nerds in San Francisco has the same idea: we’ll use our intelligence to hack ourselves to become hot and hard-working and charismatic and persuasive, then reap the benefits of all those things! This is such a seductive idea, there’s no reason whatsoever that it shouldn’t work, and every yoga studio and therapist’s office in the Bay Area has a little shed in the back where they keep the skulls of the last ten thousand bright nerds who tried this.

If you think that intelligence is one raw lump of problem-solving ability, then it should surprise you that Bay Area types and people like Scott Adams can get stuck in a loop of perpetual self-owns. But if you admit the existence of at least two intelligences, it’s a lot less confusing. This is what it looks like to be very smart in one way, but very dumb in another.

It’s not just that objective intelligence can’t be transmuted into “emotional” intelligence or social savvy or whatever we want to call it. It appears to be very difficult, if not impossible, to transmute objective intelligence into any other cognitive ability.

For example, I went to college with a guy who was super smart, but he also couldn’t do anything on time. He would be late to exams. His grades would tank because he would finish his essays but forget to turn them in. He would set meetings with his professors to sort everything out, and then never show up.

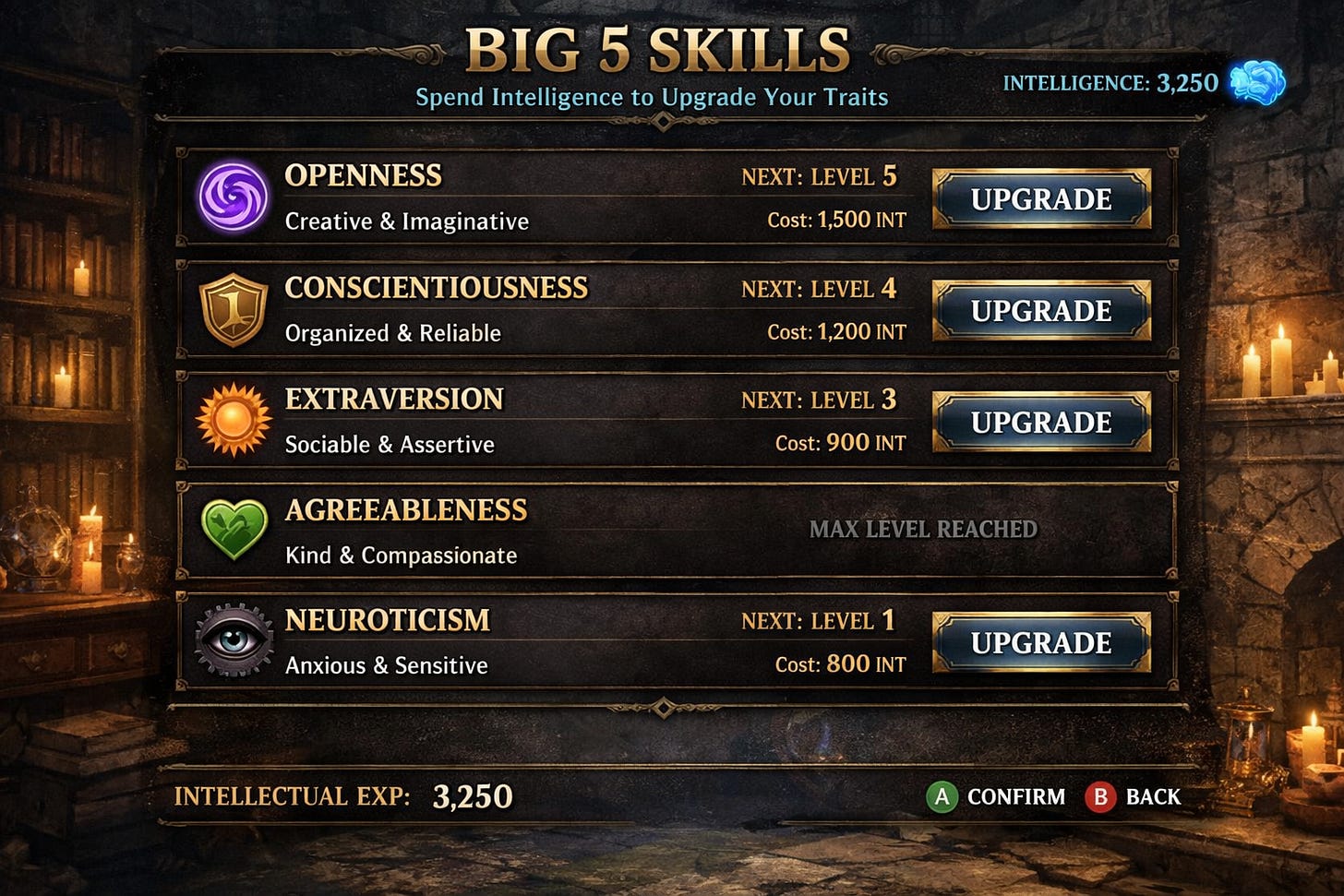

I always used to wonder: why doesn’t this guy just use his big brain to make himself more conscientious? Isn’t life one big role-playing game, and isn’t intelligence just experience points that you can assign to any of your Big 5 skills?

Clearly, it doesn’t work like this. That’s why I don’t think the universe is governed by Settlers of Catan rules, and why I don’t think more objectively intelligent machines will spontaneously generate all other kinds of intelligence.

At this point, the only hope for the AI hype crowd is that we simply don’t yet have enough objective intelligence. Sure, we may not be able to trade four units of objective intelligence for one unit of subjective intelligence, but what about four billion? What if we made the machines read the whole internet a second time? What if, instead of having third graders make dioramas of the Pilgrims or whatever, we had them use their nimble little fingers to make more Nvidia chips?

The CEO of Anthropic promises us a “country of geniuses in a data center”. Maybe that will happen! Or maybe we will discover the data center actually contains a country full of Scott Adamses. At the very least, we can look forward to many more flavors of Dilberitos.

BOTTLENECK BLINDNESS

I’m being unfair, of course. Dilbert is an objectively successful cartoon, and objective intelligence is objectively useful. Ultimately, I think having a lot more of it is going to be a good thing. But I’m guessing that we’ll soon discover many of our problems are not limited by a lack of objective intelligence.

For example, some people are hoping that AI will defibrillate sluggish areas of science and usher in scientific revolutions across the board. I would also like this to happen. But I am doubtful we’ll achieve it with an infusion of objective intelligence, because infusions of similar capabilities haven’t achieved it either.

When my PhD advisor was in grad school, he literally had to call people on the phone and ask them if they’d like to take part in a psychology study. If he could get 30 participants in a semester, he was cookin’. Participant pool management software like Sona made this process go twice as fast, and then Amazon Mechanical Turk made it go 1000x as fast. Meanwhile, Google Scholar turned a half-day spent in the library into a two-second search, and stats software like SPSS and R made data analysis go lickety-split.

All of this should have supercharged progress in psychology, but it didn’t. I think it’s questionable whether we’ve made much progress at all. So I’m not optimistic that adding another labor-saving technology to our repertoire is going to get us unstuck. People are already saying that LLMs can write a passable social science paper; unfortunately, our problem is not that we produce too few papers. Science is a strong link problem—what we need is new paradigms, not taller towers of journal articles.

The situation is different in other fields. If you’ve got your paradigm in place and all you’re missing is an army of research assistants, or an automated lab that can run 24/7, or an indefatigable grad student who can perform a billion regressions for you, you’re in luck. In those cases, unlimited objective intelligence ought to speed things up a lot, and indeed, it already has.

But the faster you go, the sooner you hit the wall. I have found myself facing all of those limitations at one time or another, and as soon as I overcame them, I was immediately stymied by some other obstacle. I think all of us suffer from this bottleneck blindness: we assume our current bottleneck is our only bottleneck. When you’re strapped for cash, you think all of your problems are cash problems. But once you’ve got some money in your pocket, you realize that what you really need is time. Free up some time, and you discover that you’re actually lacking motivation. Acquire some motivation, and you realize what you’re missing is ideas. Then you need direction, then you need discipline, then you need buy-in, and so on, forever.

Once objective intelligence is too cheap to meter, we’re going to run into all of the other bottlenecks that are still expensive and heavily metered. If I’m right that reality is not governed by the rules of Catan, then we’re not going to be able to convert objective intelligence into whatever we need to pry those bottlenecks open. The story of human struggle is not about to end with a literal deus ex machina. For better or worse, we’ll need to keep thinking.

MADAME STATS & MR. ENCYCLOPEDIA

Let me put a finer point on it.

There are two characters you can find in most academic departments. One of them we can call Madame Stats: she knows everything about crunching numbers. The other we can call Mr. Encyclopedia: he’s read every paper and he can recite them to you from memory. Right now, AI feels like having unlimited access to very friendly versions of Madame Stats and Mr. Encyclopedia. LLMs are pretty good at finding papers; they are very good at writing code. So shouldn’t they make research projects go way faster?

Well, once you get access to an infinite Madame Stats and Mr. Encyclopedia, you realize they can’t get you very far. For one thing, you can’t rely on Madame Stats and Mr. Encyclopedia entirely, because if you can’t do any stats and you never read any papers, you’re probably not going to have many interesting ideas yourself.5 Plus, while the Stats/Encyclopedia duo can tell you whether your experiment has been done before and whether you’ve run the numbers correctly, they can’t give you the single most important piece of feedback: they can’t tell you whether your idea is boring.

In fact, when you reduce the marginal cost of a lit review and a logistic regression to zero, bad taste becomes a death sentence, because now you can waste all of your time applying sound methods to stupid projects. I’ve been down this road before, where neither my collaborators nor I have any bright ideas, so we’re like, “Well, let’s just get some data!” and then we waste a few months being like “hmm what does this data mean, so many numbers, so mysterious” and then eventually we just stop meeting and we forget we ever did anything together. This is what happens when you try to use objective means to solve a subjective problem.

The most important thing I learned during my PhD was how to be bored correctly. Novices think everything is exciting, or they think everything is boring. Only masters are bored by the right things. To the extent that I have any sense of taste at all, it’s because I spent five years boring my advisor. The worst ideas bored him immediately, half-decent ideas bored him after a few hours, and the best ideas haven’t bored him yet. There is nothing objective about this judgment—you can’t put a number on it (“how glazed-over are his eyes?”), nor can you validate it with a panel of experts or put it to the wisdom of the crowd. You really just have to bore an old guy until he tells you to leave his office, and if you do that enough, eventually you’ll start getting bored before he does.

ME TO SHINING SEA

I don’t say this as someone who is allergic to the idea of AI, or who has only spent 15 minutes screwing around with a single model, hoping it will do something stupid so I can go tattle on it. If the talking computers said lots of fascinating things, I wouldn’t see any point in trying to tell a noble lie about it. And if AI can cure cancer and end all wars, I’m all for it, even if it means I’m personally out of a job.

It is possible, of course, that some breakthrough will blow through all my criticisms, that GPT-10 will start outputting pitch-perfect blog posts that sound like me, but better, and then it’s stones-in-pockets and a march to the sea for me. But if that happens, it will not be the natural continuation of trends that we’re on today. It will be because we figured out some way of hardening the squishy problems.

Until then, however, squishy problems will require squishy humans. The rules of Earth, unlike the rules of Catan, seem to state that no amount of objective intelligence can be traded for any amount of subjective intelligence. As Montaigne put it back in 1580, “though we could become learned by other men’s learning, a man can never be wise but by his own wisdom”. What does it look like to have all the learning ever created, but no wisdom of your own? Well, “as a large language model...”

1. Gosh, see how hard this is to talk about?

2. We don’t really have a word for such a person anymore, both because the word “nerd” got co-opted to move Marvel movie merchandise, and because kids now only bully each other from a safe social distance. So I guess these days the appropriate term for someone with good grades but no friends is “loser”.

3. My favorite Mensa story: when a comedian named Jamie Loftus aced the Mensa test and started trolling the Mensa Facebook groups, the members responded the way any genius would, namely, by issuing death threats.

4. I, too, was an Adams fan as a kid, and I remember getting to the end of one of his Dilbert books and suddenly Adams is claiming that gravity doesn’t exist—everything is just increasing in size all the time, so when you jump in the air, you grow and the Earth grows, and you end up reunited. (The universe is also expanding, which is why we don’t run out of room.)

5. There is another reason why you can’t replace these experts with machines, one that is not practical, but social. When I work with Madame Stats and Mr. Encyclopedia, I’m borrowing not only their intellect, but also their reputation. If they screw up the numbers or miss a citation, that’s on them. All human expertise doubles as an insurance policy.

AI provides no such coverage. A computer can’t get fired, discredited, disbarred, or defrocked. It’ll be very apologetic when it screws up, but it can’t resign in disgrace. The humans who built the machine are no help either—if an AI causes me to make a huge blunder, it’s my butt on the line, not Sam Altman’s. This is one sneaky reason why it’s hard to replace human labor even when an AI could perform some of the same tasks: it’s hard to make a meatshield without the meat.

This is one of the most useful things I've read about AI. Working as a scientist and programmer I work with AI a lot, and one thing that I notice all the time is despite how incredibly powerful it is in some domains, it just doesn't have any "taste" at all. I've struggled to explain what I mean by this to people and now I can just send them your post which perfectly encapsulates the issue.

Imagine a plane pilot who sticks 92% of landings.

Or let's lower the stakes. A journalist who is accurate 92% of the time.

A baseball pitcher who throws the ball towards home plate 92% of the time.

Near-perfection is the goal in a lot of industries. Even creative ones. That last 8% might be really, really difficult.